There's a subtle hum in our homes, a constant, almost imperceptible whisper of technology that has woven itself into the fabric of our daily lives. From the moment our smart alarm nudges us awake, through our morning coffee brewed by a connected machine, to the evening wind-down with a streaming service on a smart TV, we are surrounded by devices designed to anticipate our needs, simplify our routines, and, in many cases, listen. This isn't a dystopian fantasy plucked from a forgotten sci-fi novel; this is our reality, a landscape where convenience is king, and privacy, it seems, is merely a jester, often overlooked or actively sacrificed at the altar of innovation. For years, as a journalist deeply embedded in the labyrinthine world of cybersecurity and online privacy, I’ve watched this silent revolution unfold, often with a growing sense of unease, realizing that the very tools we embrace for their intelligence might just be the architects of our deepest privacy nightmare.

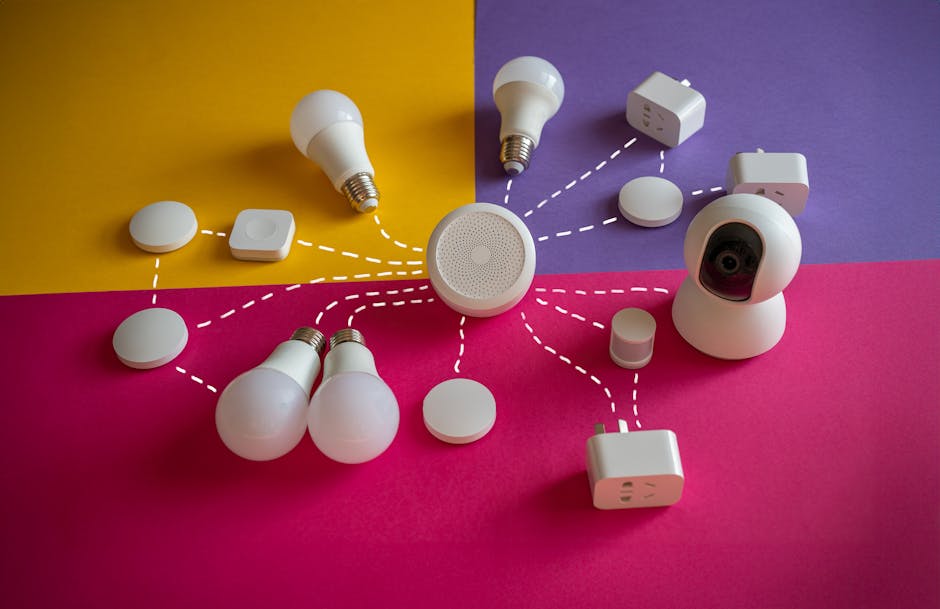

The rise of artificial intelligence has been nothing short of meteoric, transforming everything from medical diagnostics to autonomous vehicles, and crucially, embedding itself deeply within the smart devices that populate our homes and pockets. What began with simple voice commands to play music or check the weather has rapidly evolved into complex ecosystems capable of recognizing faces, predicting habits, monitoring health, and even discerning emotional states. This isn't just about a smart speaker occasionally misunderstanding a command; it's about an intricate web of sensors, microphones, and cameras constantly collecting, processing, and transmitting an unprecedented volume of personal data. The sheer scale and sophistication of this data harvesting are staggering, creating a digital shadow of our lives that is far more detailed and comprehensive than most of us could ever imagine. It’s happening right under our noses, often on the strength of consent we gave unwittingly, buried deep within layers of legalese.

The Unsettling Symphony of Silent Surveillance

Imagine for a moment that every casual conversation you have within the confines of your own home, every spontaneous thought uttered aloud, every private moment shared with a loved one, is not entirely private. This isn't a far-fetched scenario for those living with an array of smart devices. Smart speakers running voice assistants such as Amazon's Alexa or Google Assistant are designed to respond to a "wake word," a specific trigger phrase that activates their recording and processing capabilities. However, the mechanism isn't as simple as an on-off switch. To catch that phrase, the microphone has to stay on: the device continuously buffers short snippets of audio and analyzes them for the wake word. While manufacturers assure us that these buffered recordings are ephemeral and deleted if the wake word isn't detected, the very act of constant acoustic monitoring raises profound questions about the sanctity of our personal spaces and the boundaries of digital intrusion.
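For readers who want to see the shape of that mechanism, here is a minimal sketch in Python. It is an illustration under stated assumptions, not any vendor's actual implementation: audio "frames" are stood in for by plain words, and the wake phrase `("hey", "device")`, the `<silence>` marker, and the function name `frames_sent_to_cloud` are all hypothetical. The point it shows is that every frame passes through a small rolling buffer, but only what follows the wake phrase leaves the device.

```python
from collections import deque

WAKE_PHRASE = ("hey", "device")  # hypothetical two-frame wake phrase
END_OF_REQUEST = "<silence>"     # hypothetical marker for a pause in speech


def frames_sent_to_cloud(frames, wake_phrase=WAKE_PHRASE):
    """Return only the frames that would leave the device in this toy model."""
    # Rolling buffer: holds just enough recent frames to spot the wake phrase.
    # When it is full, appending a new frame silently drops the oldest one.
    rolling = deque(maxlen=len(wake_phrase))
    sent = []
    awake = False

    for frame in frames:
        if awake:
            if frame == END_OF_REQUEST:
                awake = False       # request finished, go back to listening
            else:
                sent.append(frame)  # this is what gets uploaded
            continue

        rolling.append(frame)       # every frame is heard, even if never sent
        if tuple(rolling) == wake_phrase:
            awake = True
            rolling.clear()

    return sent


if __name__ == "__main__":
    overheard = ["we", "owe", "money", "hey", "device",
                 "play", "jazz", "<silence>", "do", "not", "tell"]
    print(frames_sent_to_cloud(overheard))
```

In this toy model the private words before and after the request never leave the buffer. The privacy question raised above is what happens when the detector mishears ordinary speech as the wake phrase: everything that follows is treated as a request.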

The reality is that these devices are not just passively waiting for a command; they are actively processing the environment. This always-on listening capability, while technically necessary for their core function, creates a persistent vulnerability. We’ve seen numerous instances where these devices have mistakenly activated, recording private conversations and sometimes even sending them to unintended recipients or storing them on cloud servers for analysis. Remember the 2018 incident in which an Amazon Echo device in Portland, Oregon, recorded a couple's private conversation and then sent it to an acquaintance in their contact list? This wasn't a malicious hack; by Amazon's account, it was a chain of unfortunate misinterpretations by the assistant, which highlights how precarious it is to rely on algorithms to safeguard our most intimate moments. The convenience these devices offer is undeniable, but the trade-off, often unquantified and poorly understood, is a continuous erosion of the expectation of privacy within our own four walls.

Beyond the well-known smart speakers, an entire ecosystem of smart home devices contributes to this intricate web of surveillance. Smart TVs, for instance, often come equipped with built-in microphones and even cameras, ostensibly for voice control or video conferencing. Brands like Vizio and Samsung have faced scrutiny over the data their smart TVs collect, and in 2017 Vizio agreed to pay $2.2 million to settle charges brought by the U.S. Federal Trade Commission and the New Jersey Attorney General that it had tracked viewing habits without clear consent. Imagine your television not just displaying content but also analyzing your reactions, your presence in the room, and even the ambient sounds. Smart security cameras, while providing peace of mind, are a double-edged sword, constantly capturing video and audio and often uploading it to the cloud. Baby monitors, once simple audio devices, now feature high-definition cameras, motion sensors, and even AI-powered analytics to detect crying or movement, creating a continuous feed of data from the most vulnerable spaces in our homes. Each of these devices, individually, might seem innocuous, but taken together they paint an incredibly detailed and often unsettling picture of our lives, our habits, and our private moments.

The Pervasive Reach of Embedded Sensors

It's not just microphones that are the silent sentinels of our smart homes; a vast array of embedded sensors quietly collects an astonishing amount of data. Our smartphones, the ultimate smart devices, are veritable treasure troves of personal information, packed with accelerometers, gyroscopes, magnetometers, barometers, proximity sensors, ambient light sensors, and, of course, GPS. These sensors don't just enable convenient features; they continuously feed data streams that, when combined, can reveal incredibly intimate details about our lives. Your phone knows not just where you are, but how fast you're moving, whether you're climbing stairs or sitting still, and even which direction you're facing. This granular data, often collected in the background by various apps, forms the backbone of highly sophisticated user profiles that go far beyond simple demographic information.
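To make "how fast you're moving" concrete, the sketch below shows how little it takes to turn two timestamped location fixes into a speed and a rough guess at activity. It uses the standard haversine formula for distance on a sphere; the function names and the speed thresholds in `guess_activity` are illustrative assumptions, not values taken from any real app or platform.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def speed_mps(fix_a, fix_b):
    """Average speed in m/s between two fixes of the form (seconds, lat, lon)."""
    (t1, lat1, lon1), (t2, lat2, lon2) = fix_a, fix_b
    return haversine_m(lat1, lon1, lat2, lon2) / (t2 - t1)


def guess_activity(speed):
    """Label a speed with a coarse activity; thresholds are illustrative only."""
    if speed < 0.2:
        return "still"
    if speed < 2.5:
        return "walking"
    if speed < 8.0:
        return "running or cycling"
    return "in a vehicle"


if __name__ == "__main__":
    morning = (0, 45.5200, -122.6800)      # hypothetical fix at t = 0 s
    later = (100, 45.5210, -122.6800)      # hypothetical fix 100 s later
    v = speed_mps(morning, later)
    print(round(v, 2), guess_activity(v))
```

Two fixes are enough for a speed; a day's worth, combined with the other sensors listed above, is enough to sketch a routine.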

"The greatest threat to privacy is often convenience. We willingly trade our data for services that simplify our lives, without fully understanding the long-term implications of that exchange." — Dr. Helen Nissenbaum, Professor of Information Science, Cornell Tech.

Smart thermostats, like those from Nest or Ecobee, learn your temperature preferences, your daily routines, when you're home, and when you're away. Smart lighting systems can track your presence in rooms, your sleep patterns if integrated with other sensors, and even your preferred ambiance at different times of the day. Wearable fitness trackers and smartwatches monitor heart rates, sleep cycles, activity levels, and even blood oxygen saturation. This health data, often considered highly sensitive, is continuously uploaded to cloud servers, where it's analyzed by AI algorithms to provide personalized insights. While the immediate benefit is often improved health and wellness, the broader implications of having such intimate physiological data aggregated and stored by third-party companies are immense, touching upon everything from insurance premiums to employment opportunities, creating a potential minefield of discrimination and targeted exploitation. The sheer volume and variety of data points collected by these interconnected devices paint an ever-clearer picture of who we are, what we do, and even how we feel, all without us ever explicitly hitting a "record" button.

This burgeoning AI privacy nightmare is rooted in a technological paradigm shift that prioritizes data collection above almost all else. The Internet of Things (IoT) promises a world where every device is connected, intelligent, and responsive to our needs. This intelligence, however, is fueled by data: vast, continuous streams of information about our environments, our behaviors, and our very selves. Companies argue that this data is essential for improving services, personalizing experiences, and developing new features. And to a certain extent, they're not wrong; AI models thrive on data. But the line between necessary data collection for functionality and excessive data harvesting for profit has become dangerously blurred. The value proposition is simple: trade a piece of your privacy for a piece of convenience. But for many, the true cost of that exchange remains hidden, obscured by complex terms of service and opaque data practices, leaving us vulnerable to an unseen digital architecture that is always listening, always watching, and always learning.