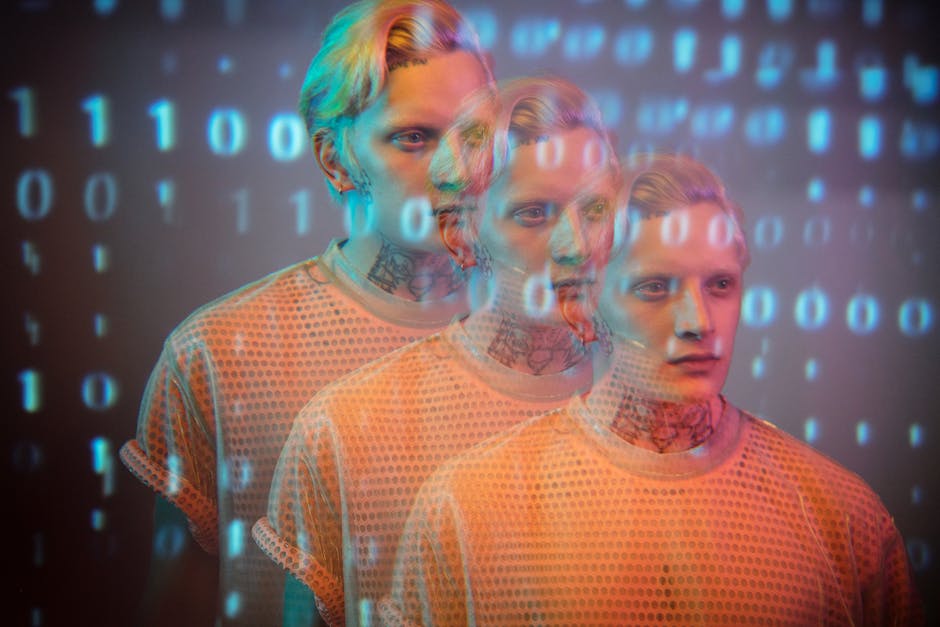

Imagine waking up one morning to find your deepest fears, your most private thoughts, and your most nuanced preferences not only known but actively anticipated by an unseen entity. This isn't the plot of a dystopian sci-fi novel; it's the chilling reality unfolding as artificial intelligence, powered by an insatiable hunger for data, constructs an increasingly sophisticated and eerily accurate digital clone of you. We're not talking about a simple online profile with your demographic details and a few liked pages; we're talking about an algorithmic doppelgänger, a synthetic representation of your very essence, capable of predicting your next click, your next purchase, perhaps even your next political leaning, with unnerving precision. This isn't some distant future threat; the AI privacy nightmare is already here, lurking in the algorithms that shape our daily digital lives, and the question is no longer *if* your digital clone exists, but *how* it’s already being used against you.

For years, those of us knee-deep in the cybersecurity and online privacy trenches have been sounding the alarm about data collection. We’ve warned about cookies, tracking pixels, behavioral advertising, and the insidious erosion of anonymity. But what we’re witnessing now is a quantum leap beyond mere data profiling. AI doesn't just collect data points; it synthesizes them, identifies patterns imperceptible to the human eye, and crafts a dynamic, evolving model of your personality, your vulnerabilities, and your likely responses to various stimuli. This digital double, born from the vast ocean of your online interactions – every search query, every social media post, every article read, every video watched, every location ping – is becoming a potent tool, often deployed without your explicit knowledge or consent, to influence, manipulate, and, ultimately, control aspects of your life in ways we are only just beginning to comprehend.

The Unseen Architects of Your Online Self

From the moment you first ventured onto the internet, a silent, relentless process of data aggregation began. Every click, every scroll, and every interaction with a website, an app, or a smart device leaves a tiny digital crumb. Individually, these crumbs might seem insignificant, but when amassed by the billions and fed into the voracious maw of artificial intelligence, they become the building blocks for an incredibly detailed mosaic of your existence. Think about it: the specific way you phrase a Google search for medical advice, the subtle emotional cues in your social media comments, the time of day you check your banking app, the routes you take using GPS navigation, even the cadence of your voice when interacting with a smart assistant – all of it is grist for the AI mill. These aren't just data points; they're behavioral indicators, psychological tells, and lifestyle markers that, when analyzed by sophisticated algorithms, paint a picture far more intimate than anything you might reveal to your closest friends.

The sheer volume and variety of data now being collected are staggering. It goes far beyond the obvious. Your smart TV isn't just showing you Netflix; it's logging what you watch, when you watch it, and potentially even who is in the room through its embedded microphone and camera (if enabled, of course). Your fitness tracker isn't just counting steps; it’s mapping your sleep cycles, heart rate, and activity patterns, revealing insights into your health and habits. Even your car, increasingly a connected device on wheels, is collecting data on your driving style, your destinations, and potentially your conversations. All these disparate streams of information are then cross-referenced, correlated, and analyzed by AI systems designed to find connections and predict behaviors. This multi-dimensional data capture allows AI to construct a holistic view of your life, enabling it to understand your routines, anticipate your needs, and even infer your emotional state with an unsettling degree of accuracy. It's like having thousands of invisible scribes meticulously documenting every facet of your being, and then handing those notes over to a super-intelligent psychologist.
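To make the cross-referencing step concrete, here is a minimal Python sketch. It assumes three hypothetical event streams (a TV, a fitness tracker, and a car) that happen to share a user identifier. The field names, the sample records, and the `build_profile` helper are all invented for illustration; real systems are far larger and match records in more elaborate ways.

```python
from collections import Counter, defaultdict

# Hypothetical sample events; every field name and value here is invented.
tv_events = [
    {"user": "u1", "hour": 23, "title": "late-night news"},
    {"user": "u1", "hour": 23, "title": "documentary"},
]
fitness_events = [
    {"user": "u1", "hour": 6, "activity": "run"},
    {"user": "u1", "hour": 6, "activity": "run"},
    {"user": "u1", "hour": 7, "activity": "walk"},
]
car_events = [
    {"user": "u1", "hour": 8, "destination": "office"},
    {"user": "u1", "hour": 8, "destination": "office"},
    {"user": "u1", "hour": 18, "destination": "gym"},
]


def build_profile(streams):
    """Merge events from several sources into one per-user profile."""
    merged = defaultdict(list)
    for source, events in streams.items():
        for event in events:
            # Tag each event with its source so streams can be correlated.
            merged[event["user"]].append({"source": source, **event})

    profiles = {}
    for user, events in merged.items():
        hours_by_source = defaultdict(Counter)
        for event in events:
            hours_by_source[event["source"]][event["hour"]] += 1
        profiles[user] = {
            "event_count": len(events),
            # Most frequent hour of activity per source: a crude "routine".
            "typical_hour": {
                source: counts.most_common(1)[0][0]
                for source, counts in hours_by_source.items()
            },
        }
    return profiles


profiles = build_profile(
    {"tv": tv_events, "fitness": fitness_events, "car": car_events}
)
print(profiles["u1"])
```

Even in this toy version, three unremarkable logs combine into a rough daily routine: early exercise, a morning commute, late-night viewing. None of the individual sources reveals that on its own.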

This relentless data aggregation is driven by an economic imperative. In the digital age, data is the new oil, and AI is the refinery. Companies, from tech giants to niche advertisers, are in fierce competition to understand consumers better than anyone else. The promise of hyper-personalized experiences, more effective advertising, and optimized services fuels this data collection frenzy. But the line between personalization and pervasive surveillance is increasingly blurred. What starts as an innocent attempt to recommend a product you might like can quickly morph into an AI system that infers your financial vulnerabilities, your political leanings, and your susceptibility to certain types of messaging. This isn't just about showing you relevant ads; it's about building a comprehensive profile that can be leveraged for a multitude of purposes, some benign, many deeply concerning. The unseen architects are not just building a profile; they are building a predictive model of *you*.

Beyond the Profile Picture: The Birth of Your AI Doppelgänger

When we talk about a "digital clone" or "AI doppelgänger," it's crucial to understand that this concept extends far beyond a static collection of data points. Think of it not as a dossier, but as a living, breathing entity, albeit a virtual one. This AI clone is a dynamic, constantly updated simulation of you, engineered to react and behave as you would in various hypothetical scenarios. It learns from every new piece of data, refining its understanding of your personality, your decision-making processes, and your emotional triggers. It's a predictive model so advanced that it can essentially "think" like you, anticipate your responses, and even generate content or interactions in your simulated voice or style. This isn't science fiction; it’s the bleeding edge of AI, where synthetic identity creation is becoming frighteningly sophisticated.
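The idea of a model that "learns from every new piece of data" can be illustrated with a deliberately tiny Python sketch. It assumes a stream of content categories a person clicks on and keeps an exponentially decayed interest score for each one. The `PreferenceModel` class, its `decay` parameter, and the category names are hypothetical, and this is far simpler than anything a real profiling system would use.

```python
from collections import defaultdict


class PreferenceModel:
    """Toy model: exponentially decayed interest score per category."""

    def __init__(self, decay=0.5):
        # decay is an illustrative choice: lower values forget faster.
        self.decay = decay
        self.scores = defaultdict(float)

    def update(self, category):
        # Fade all existing scores, then reinforce the observed category.
        for key in self.scores:
            self.scores[key] *= self.decay
        self.scores[category] += 1.0

    def predict(self):
        # Guess the category with the highest current score.
        if not self.scores:
            return None
        return max(self.scores, key=self.scores.get)


model = PreferenceModel()
for category in ["news", "news", "sports", "sports", "sports"]:
    model.update(category)

print(model.predict())  # sports
```

Each new click shifts the prediction a little, and older behavior gradually fades. That is the sense in which such a model is "constantly updated" rather than a fixed dossier.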

Consider the implications: an AI clone could be used to test new marketing campaigns, to see which messages you, specifically, are most likely to respond to, before they are even shown to you. It could simulate your reactions to political narratives, helping campaigns craft micro-targeted propaganda designed to sway your vote. Imagine an AI chatbot trained on your entire digital communication history – your emails, texts, social media posts – capable of holding a conversation with someone, mimicking your linguistic style, your opinions, and even your emotional nuances, making it virtually indistinguishable from the real you. We've seen early glimpses of this with deepfake technology for video and audio, but the true digital clone goes much deeper, replicating your cognitive and behavioral patterns, not just your superficial appearance. This is where the concept moves from simple data analysis to true synthetic identity.

"The ultimate goal of many AI systems is not just to understand human behavior, but to predict and, ultimately, influence it. Your digital clone is the most powerful tool in achieving that influence." – Dr. Evelyn Reed, AI Ethicist and Privacy Advocate.

This evolving digital double raises profound questions about identity, consent, and autonomy. If an AI can accurately predict your choices and even simulate your responses, where does your free will begin and end? Who owns this digital clone? Is it you, the source of the data, or the entities that collected and processed it? The birth of your AI doppelgänger isn't just a technological marvel; it's a philosophical challenge to what it means to be an individual in an increasingly data-driven world. It's a stark reminder that the lines between the physical self and the digital self are blurring at an alarming rate, and the implications for our privacy and personal agency are nothing short of monumental. We are entering an era where our digital reflections might be used in ways we can't foresee, against our best interests, and without any real recourse.