AI Predicts Your Future Decisions and Manipulates Them

If the idea of Big Tech knowing your past and present wasn't unsettling enough, consider that its artificial intelligence and machine learning systems are designed not just to understand who you are, but to predict what you will do next and, more profoundly, to subtly influence those decisions. This isn't science fiction; it's the cutting edge of predictive analytics, where vast datasets are fed into complex models that identify patterns and correlations no human analyst could spot unaided. These algorithms can forecast everything from your next purchase and your likelihood of switching political parties to your risk of developing certain health conditions and your propensity to engage with specific types of content. Their power lies in anticipating your needs, desires, and vulnerabilities before you consciously recognize them yourself, which allows for a level of personalized influence that is both pervasive and highly effective.

Think about the recommendations you receive on streaming services, e-commerce sites, or social media feeds. These aren't random suggestions; they are the output of sophisticated predictive models designed to keep you engaged and spending. Netflix, for instance, uses an intricate algorithm that analyzes your viewing history, the time you spend on certain genres, what you skip, and even the devices you use, to recommend the shows and movies it believes you are most likely to watch. Amazon similarly predicts what products you might buy next, often before you've even considered them. While these examples might seem benign, the underlying technology has far more profound implications. It's being used to determine who gets offered a loan, who sees certain job advertisements, who is deemed a high risk by insurance companies, and even who might be more susceptible to misinformation. By predicting our future actions, these systems can strategically present information or options that steer us towards a desired outcome, often one that benefits the platform or its advertisers rather than one that serves our own best interests.
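To make the idea concrete, here is a deliberately tiny sketch of how viewing time alone can drive a recommendation. It is not how Netflix or any real service works; the function names (`genre_affinity`, `recommend`), the record fields, and the titles are all invented for illustration, and real systems use far richer signals and models.

```python
from collections import defaultdict

def genre_affinity(history):
    """Turn minutes watched per genre into each genre's share of total viewing time."""
    minutes = defaultdict(float)
    for entry in history:
        minutes[entry["genre"]] += entry["minutes_watched"]
    total = sum(minutes.values())
    return {g: m / total for g, m in minutes.items()} if total else {}

def recommend(history, catalog, top_n=2):
    """Rank unwatched titles by how much of the viewer's time their genre has captured."""
    affinity = genre_affinity(history)
    watched = {entry["title"] for entry in history}
    candidates = [item for item in catalog if item["title"] not in watched]
    candidates.sort(key=lambda item: affinity.get(item["genre"], 0.0), reverse=True)
    return [item["title"] for item in candidates[:top_n]]

# Hypothetical viewing history: two thrillers watched in full, one reality show abandoned.
history = [
    {"title": "Heist Night", "genre": "thriller", "minutes_watched": 110},
    {"title": "Cold Trail", "genre": "thriller", "minutes_watched": 95},
    {"title": "Bake Quest", "genre": "reality", "minutes_watched": 5},
]
# Hypothetical catalog of titles the service could suggest.
catalog = [
    {"title": "Cold Trail", "genre": "thriller"},
    {"title": "Silent Hour", "genre": "thriller"},
    {"title": "Cake Clash", "genre": "reality"},
    {"title": "Deep Ocean", "genre": "documentary"},
]

print(recommend(history, catalog))
```

Even this toy version never asks what you want. It infers it from what you lingered on and what you abandoned, then puts the closest match at the top of the list.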

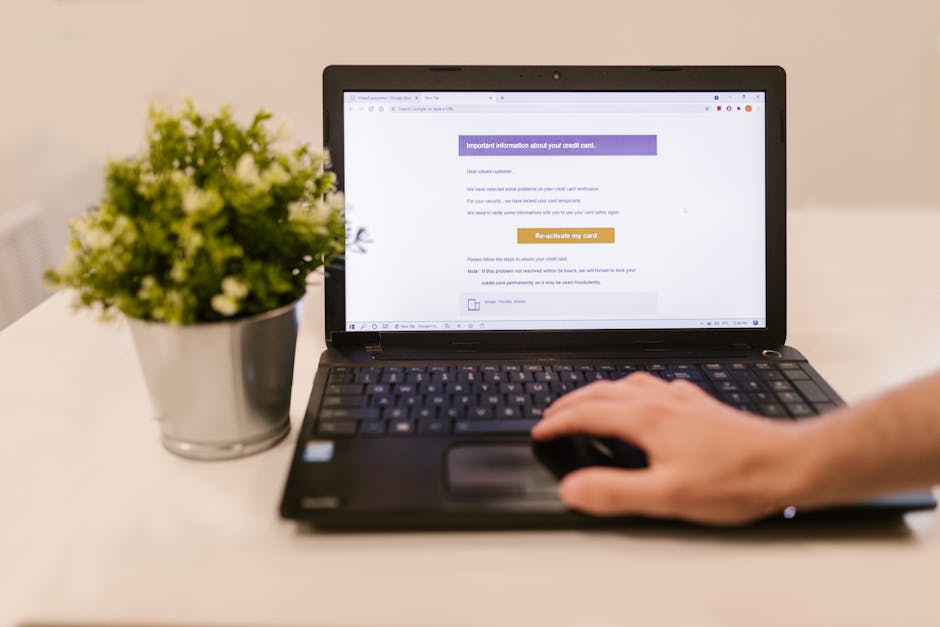

The manipulation comes into play when these predictions are used to curate your digital environment in a way that actively shapes your behavior. If an algorithm predicts you are likely to be swayed by a particular political message, it can ensure you see more of that content, subtly reinforcing a viewpoint. If it identifies you as someone prone to impulsive purchases, it might show you time-sensitive deals or emotionally resonant ads. This isn't always overt; it's often a gradual, almost imperceptible nudging, delivered through personalized news feeds, curated search results, and targeted advertisements. The danger here is twofold. First, it can create echo chambers, limiting your exposure to diverse perspectives and reinforcing existing biases. Second, it undermines genuine free will by constantly pre-filtering and pre-selecting the information you encounter, making it harder to make truly independent decisions. The invisible profile, therefore, becomes a tool not just for understanding you, but for actively constructing your reality and, in doing so, shaping your future.

Your "Private" Conversations Aren't Always So

In an age where nearly all communication has moved online, the concept of a truly private conversation feels increasingly elusive. We often comfort ourselves with the idea of end-to-end encryption, believing it shields our messages and calls from prying eyes. While end-to-end encryption is a vital security feature that protects the *content* of our communications from being read by intermediaries, it's crucial to understand its limitations. It doesn't, for instance, protect the *metadata* of your conversations – who you called, when, for how long, and sometimes even your location during the call. This metadata alone can paint an incredibly detailed picture of your social network, your habits, and your routines. A government agency or a data broker might not know the exact words you exchanged, but they can infer a tremendous amount from the patterns of your communication, identifying significant relationships, periods of activity, and even your movements throughout the day, all without ever breaking encryption. It's like knowing who sent a letter, when it was sent, and to whom, even if you can't read the letter's contents.
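A short sketch shows how little it takes. The call log below is entirely made up, and the `summarize` helper is a hypothetical name, not any agency's or broker's actual tooling. Note that it contains no message content at all: only who was called, when, and for how many minutes.

```python
from collections import Counter
from datetime import datetime

# Hypothetical call records: (contact, start time, duration in minutes). No content.
call_log = [
    ("alice", "2024-03-04 23:10", 42),
    ("alice", "2024-03-05 23:05", 38),
    ("clinic", "2024-03-06 09:15", 6),
    ("alice", "2024-03-06 23:20", 51),
    ("clinic", "2024-03-08 09:30", 4),
    ("bob", "2024-03-09 14:00", 2),
]

def summarize(log):
    """Infer the closest contact and the busiest hour from metadata alone.

    Assumes a non-empty log.
    """
    minutes_per_contact = Counter()
    calls_per_hour = Counter()
    for contact, started, minutes in log:
        minutes_per_contact[contact] += minutes
        hour = datetime.strptime(started, "%Y-%m-%d %H:%M").hour
        calls_per_hour[hour] += 1
    closest = minutes_per_contact.most_common(1)[0][0]
    busiest_hour = calls_per_hour.most_common(1)[0][0]
    return closest, busiest_hour, dict(minutes_per_contact)

closest, busiest_hour, totals = summarize(call_log)
print(closest, busiest_hour, totals)
```

From six rows, an observer can guess at a close late-night relationship and repeated morning calls to a clinic, all without reading a single word that was said.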

Beyond metadata, the rise of voice assistants like Amazon Alexa, Google Assistant, and Apple Siri introduces another layer of potential privacy compromise. These devices are designed to be "always listening" for their wake words, and while companies assure us that recordings are only sent to their servers after the wake word is detected, there have been numerous instances where false positives or accidental activations have led to snippets of private conversations being recorded and, in some cases, even reviewed by human contractors. While companies claim these human reviews are for improving AI accuracy and are anonymized, the very act of a private conversation being captured and potentially analyzed, even by a limited human audience, erodes the sense of personal sanctity. The convenience of hands-free interaction comes with the implicit risk that your most intimate moments might not be as private as you assume, transforming your living room into a potential data collection point for corporate giants.

Furthermore, the permissions we grant to mobile apps often create gaping holes in our perceived privacy. How many times have you clicked "Allow" without truly scrutinizing why a flashlight app needs access to your microphone, camera, or contact list? These seemingly innocuous permissions can allow apps to collect audio recordings, visual data, and network information in the background, often for purposes unrelated to the app's primary function. These data points are then fed into the larger data ecosystem, contributing to your invisible profile. There have been documented cases where apps, purportedly for something as simple as weather forecasting or games, were found to be covertly collecting extensive personal data, including device identifiers, location history, and even call logs, then selling this information to third-party data brokers. The line between necessary functionality and excessive data harvesting is constantly blurred, and our casual acceptance of these permissions effectively turns our personal devices into sophisticated, always-on surveillance tools for the companies behind the apps. Our digital whispers, once thought to be ephemeral, are increasingly being captured, analyzed, and integrated into the ever-growing dossier that defines our digital selves.

Even When You Delete, It's Often Still There

One of the most persistent and comforting myths of the digital age is the idea that when you click "delete," your data is truly gone, vanished into the ether. Unfortunately, this is often an illusion, a digital sleight of hand designed to provide a false sense of control. For many Big Tech companies, "deleting" something – be it a photo, a post, or even an entire account – often means little more than removing it from public view or de-linking it from your active profile. The data itself, however, can persist on their servers, in their backups, or within their vast, distributed data centers for extended periods, sometimes indefinitely. This is often justified under the guise of "business purposes," "legal compliance," or "improving services," but for the individual, it means that traces of their past digital lives remain accessible, despite their best efforts to erase them. It's like tearing up a photograph but knowing copies still exist in countless albums and archives you can't access.
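Engineers often call this pattern a "soft delete." The sketch below illustrates the general idea using Python's built-in `sqlite3` module; the `posts` table, its `deleted_at` column, and the helper functions are assumptions made for this example and do not describe any particular company's systems.

```python
import sqlite3

# In-memory database with a hypothetical posts table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE posts (id INTEGER PRIMARY KEY, body TEXT, deleted_at TEXT)")
conn.execute("INSERT INTO posts (body) VALUES ('an old post')")

def soft_delete(conn, post_id):
    """Mark the row as deleted; the content itself stays in the table."""
    conn.execute("UPDATE posts SET deleted_at = datetime('now') WHERE id = ?", (post_id,))

def hard_delete(conn, post_id):
    """Actually remove the row from this table."""
    conn.execute("DELETE FROM posts WHERE id = ?", (post_id,))

def visible_posts(conn):
    """What the user-facing site would show: only rows not marked deleted."""
    return conn.execute("SELECT id, body FROM posts WHERE deleted_at IS NULL").fetchall()

def stored_posts(conn):
    """What is still physically stored, regardless of the deleted flag."""
    return conn.execute("SELECT id, body FROM posts").fetchall()

soft_delete(conn, 1)
print(visible_posts(conn))  # the user sees nothing
print(stored_posts(conn))   # the row is still stored
hard_delete(conn, 1)
print(stored_posts(conn))   # only now is the row gone from this table
```

After the soft delete, the post disappears from everything the user can see while remaining fully intact in storage. Even the hard delete only clears this one table; any backup taken earlier would still hold a copy.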

The reasons for this data retention are varied and complex. Companies often maintain extensive backups for disaster recovery, meaning that even if something is deleted from the primary database, it might still exist in an older backup snapshot. Furthermore, regulatory requirements in different jurisdictions can mandate that certain types of data be kept for specific periods. However, a significant driver is simply the intrinsic value of the data itself. Every piece of information contributes to the invisible profile, and companies are loath to relinquish something that could be valuable for future analytics, advertising, or product development. This means that even if you delete a controversial tweet from five years ago, it could still be present in a company's internal archives, potentially resurfacing in a data breach or being accessed for internal analysis. The "right to be forgotten" provisions, such as those under Europe's GDPR, offer some legal recourse, but their application is often limited to specific geographic regions and can be challenging to enforce, especially when data has been widely shared with third parties.

The concept of "dark patterns" further complicates the illusion of deletion. These are user interface designs that intentionally trick or nudge users into making choices they might not otherwise make, often to the benefit of the company. When it comes to privacy, dark patterns manifest as convoluted privacy settings, difficult-to-find deletion options, or misleading language that makes it seem like you're deleting data when you're only making it less visible. For example, a platform might make it incredibly easy to upload content but require a confusing, multi-step process to truly remove it, or present an option to "deactivate" an account rather than "delete" it, with deactivation simply pausing data collection while retaining all historical information. This deliberate obfuscation creates a labyrinthine experience for anyone genuinely trying to reclaim their data, leading to frustration and, often, resignation. The chilling reality is that in the digital realm, deletion is often a promise unfulfilled, leaving a permanent, indelible record of our digital existence, a ghost in the machine that continues to inform and shape our invisible profile long after we believe it's gone.