The hum of our digital lives has grown into a symphony, or perhaps a cacophony, of data. Every click, every search, every spoken command into a smart device now resonates with an intensity we’re only just beginning to grasp. For years, we’ve talked about data as the new oil, a valuable commodity extracted from our daily interactions, refined, and sold to the highest bidder. But the analogy feels quaint, almost nostalgic, in the face of what artificial intelligence is doing to that data. AI isn't just refining oil; it’s building machines out of it, machines that learn, infer, and predict with unnerving accuracy, often without our explicit consent or even our awareness.

We stand at a precipice, a moment where the convenience and awe-inspiring capabilities of AI collide head-on with our fundamental right to privacy. The algorithms that power our favorite services, from personalized recommendations to predictive text, are becoming increasingly sophisticated, drawing connections between disparate pieces of information about us that even we might not consciously make. This isn't science fiction anymore; it’s the mundane reality of our interconnected world, where every interaction leaves a digital crumb that AI is only too eager to gobble up. The stakes are higher than ever, not just for our marketing profiles, but for our autonomy, our choices, and ultimately, our very sense of self in an increasingly surveilled landscape.

The Invisible Gaze of Algorithmic Intelligence

Remember when privacy concerns largely revolved around corporations selling your email address to spammers? Ah, simpler times. Today, the landscape is far more complex, reshaped by artificial intelligence. AI doesn't just collect data; it interprets, analyzes, and learns from it at a scale and speed that human beings simply cannot replicate. It can find patterns in your purchasing habits that suggest your political leanings, infer your health status from your search queries, and even estimate your emotional state from the tone of your voice or the expressions on your face in a video call. This isn't just about targeted ads anymore; it's about building comprehensive, dynamic profiles of every individual, profiles that can be used for everything from personalized healthcare to social credit systems, depending on who holds the keys to the algorithms.

The sheer volume of data we generate daily is staggering, a veritable Niagara Falls of digital exhaust. Every time you unlock your phone, scroll through a social feed, ask a voice assistant a question, or even drive a modern car, you're contributing to this deluge. And AI, with its insatiable appetite for information, is the ultimate beneficiary. It doesn't just passively observe; it actively seeks out, synthesizes, and makes inferences, creating a shadow version of you that exists solely in the digital realm. This digital doppelgänger, crafted from your data, can be more predictable, more exploitable, and in some ways, more 'known' to the algorithms than you are to yourself. It’s a chilling thought, isn't it? That an unseen entity might understand your desires, fears, and vulnerabilities better than your closest friends or family.

Consider the implications. If AI can predict your next purchase with, say, 80% accuracy, what about your next political vote? Or your likelihood of defaulting on a loan? Or even your risk of developing certain health conditions? The potential for beneficial applications, like early disease detection or personalized education, is undeniable and often touted by developers. But the darker side, the potential for manipulation, discrimination, and the erosion of free will, looms large. We’re talking about systems that can nudge our decisions, subtly influence our opinions, and even pre-empt our actions based on predictive models built from our most intimate data points. This isn't just about preventing spam; it's about safeguarding the very essence of human agency in an age of ubiquitous, intelligent systems.

The Silent Data Harvesters Among Us

Many of us have grown accustomed to the idea that our data is being collected. We click "Agree" to terms and conditions without a second thought, eager to access the latest app or service. But the scale and sophistication of this collection have fundamentally changed with the rise of AI. It’s no longer just about the explicit data you provide, like your name and email. It's about implicit data: the cadence of your typing, the way you swipe on your phone, how long your gaze lingers on certain content, the tone of your voice, the background noise in your home. These seemingly innocuous data points, when fed into powerful AI models, can become remarkably revealing, painting a nuanced picture of your habits, preferences, and even your emotional state.

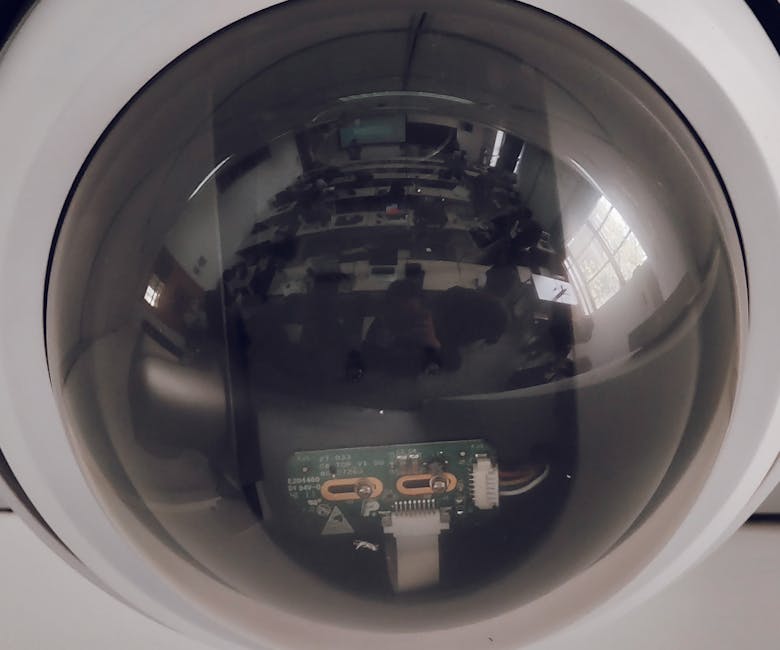

The problem is exacerbated by the fact that many AI-powered services are designed to be opaque. We don't see the algorithms at work, we don't understand the complex models behind their decisions, and we certainly don't get a say in how our inferred profiles are used or shared. This lack of transparency creates an inherent power imbalance, where the companies developing and deploying AI systems hold all the cards, and individuals are left largely in the dark, struggling to understand the mechanisms by which their digital identities are being constructed and leveraged. It’s like being a character in a play where you don't know the script, the director, or even the audience, yet your every move is being meticulously observed and analyzed.

"Data is not just information; it's a reflection of our lives, our choices, our very being. When AI consumes this data, it consumes a part of us, and without proper safeguards, that consumption can lead to unforeseen consequences for individual liberty and societal fairness." – Dr. Anya Sharma, Digital Ethics Researcher.

This isn't about being paranoid; it's about being pragmatic. The rapid advancement of AI demands a corresponding evolution in our understanding of privacy and our proactive efforts to protect it. We can no longer afford to be passive participants in this digital revolution. The time for casual indifference to our privacy settings has passed. The algorithms are learning, watching, and waiting, and if we don't take deliberate steps to manage our digital footprint, our data will indeed become their next, and perhaps most valuable, meal. This article isn't just a warning; it’s a call to action, a guide to empowering yourself in an age where your personal information is the most sought-after commodity.

So, what can we do? The answer isn't to retreat from technology, a move that is often both impractical and undesirable. Instead, it’s about becoming more informed, more deliberate, and more empowered users of the digital world. It means understanding where our data goes, how it's used, and, crucially, how to reclaim some semblance of control over it. We're going to dive deep into five critical areas where AI is particularly voracious, and I'll walk you through the essential privacy settings you absolutely must change. This isn't just a tech tutorial; it's a necessary intervention for your digital future, a way to shield your personal identity from the ever-expanding gaze of artificial intelligence.