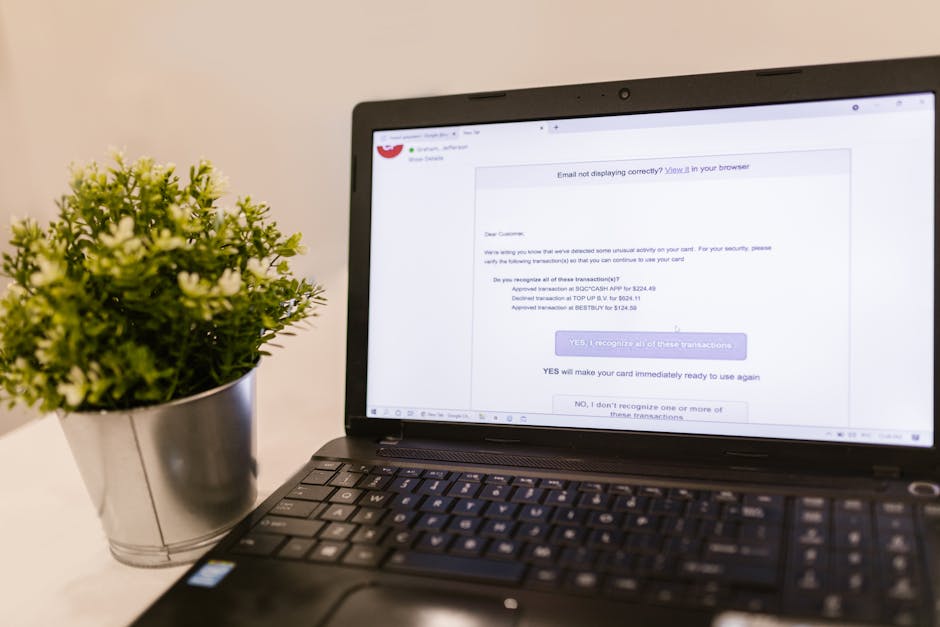

Remember that email from your "bank" asking you to verify your account details, the one with the slightly off logo and a few glaring grammatical errors? Or perhaps the urgent message from a "long-lost relative" promising a fortune, riddled with awkward phrasing that immediately screamed scam? For years, these clumsy attempts at digital deception have been our primary adversaries in the online world, often easily identifiable by a discerning eye or a quick Google search. We’ve become rather good at spotting the red flags, haven't we? We've learned to scrutinize sender addresses, hover over suspicious links, and laugh at the sheer audacity of some of these phishing attempts, confident in our ability to navigate the treacherous waters of the internet.
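To make those familiar red-flag checks concrete, here is a minimal sketch of two of them in Python: comparing the sender's domain against the one you expect, and checking whether a link's visible text matches where it actually points. Everything in it is an illustrative assumption rather than a real filter: the function names (`sender_domain`, `red_flags`), the `LinkCollector` class, and the reserved `.example` domains are all hypothetical.

```python
from email.utils import parseaddr
from html.parser import HTMLParser
from urllib.parse import urlparse


def sender_domain(from_header: str) -> str:
    """Return the lowercased domain of the address in a From header."""
    _display_name, address = parseaddr(from_header)
    return address.rpartition("@")[2].lower()


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> tag in an HTML body."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None  # href of the <a> tag currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def red_flags(from_header: str, html_body: str, expected_domain: str) -> list:
    """Return human-readable warnings for two classic phishing tells."""
    flags = []

    # Tell 1: the sender's domain is neither the expected one nor a subdomain of it.
    domain = sender_domain(from_header)
    if domain != expected_domain and not domain.endswith("." + expected_domain):
        flags.append(f"sender domain {domain!r} is not {expected_domain!r}")

    # Tell 2: the link text looks like a URL but the href points somewhere else.
    collector = LinkCollector()
    collector.feed(html_body)
    for href, text in collector.links:
        target = (urlparse(href).hostname or "").lower()
        shown = (urlparse(text).hostname or "").lower() if "://" in text else ""
        if shown and target != shown:
            flags.append(f"link text shows {shown!r} but points to {target!r}")
    return flags


if __name__ == "__main__":
    message_from = '"Your Bank" <support@bank-secure.example>'
    message_html = (
        '<p>Please verify your account: '
        '<a href="http://login.attacker.example/verify">https://www.bank.example</a></p>'
    )
    for warning in red_flags(message_from, message_html, "bank.example"):
        print(warning)
```

Checks like these only catch the clumsy cases: a message sent from a compromised legitimate account, with honest-looking links and flawless prose, would pass both.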

But what if those tell-tale signs vanished? What if the grammar was flawless, the tone perfectly matched the supposed sender, and the urgency felt legitimate, crafted with an almost uncanny understanding of your personal situation and professional obligations? Imagine an email from your CEO, impeccably worded, referencing a recent company event, and requesting an immediate, seemingly innocuous action that, in hindsight, opens the door to financial loss or a data breach. This isn't a hypothetical scenario from a dystopian sci-fi novel anymore; it's the chilling reality emerging from rapid advances in artificial intelligence. We are entering an era in which digital attacks are so sophisticated, so personalized, and so convincing that you may not see them coming, and our traditional defenses start to feel like trying to stop a bullet with a paper shield.

The Dawn of Hyper-Realistic Digital Deception

For decades, phishing has remained a stubbornly effective attack vector, largely because it exploits the most vulnerable component in any security architecture: the human being. Whether through email, text messages (smishing), or voice calls (vishing), attackers have consistently used social engineering to manipulate individuals into revealing sensitive information, clicking malicious links, or transferring funds. Despite widespread awareness campaigns, the success rate has stayed alarmingly high, a result usually attributed to human error, distraction, or simply the overwhelming volume of digital communications we all receive daily. But the advent of generative AI tools, particularly large language models (LLMs) such as GPT-4, has fundamentally reshaped this landscape, transforming crude, mass-produced scams into highly targeted, bespoke operations that are very hard to distinguish from legitimate communications.

The core limitation of traditional phishing was the trade-off between scale and quality. Attackers could send millions of emails, but each one was a generic, often poorly translated, one-size-fits-all attempt. Sheer volume was the strategy: send enough messages and a small percentage of recipients would fall for them. Generative AI flips this script entirely, allowing threat actors to produce a large volume of high-quality, contextually relevant, and grammatically correct communications with minimal effort. This capability enables a new breed of highly personalized spear-phishing attacks that can get past even vigilant human scrutiny, exploiting our inherent trust in familiar communication patterns and our reliance on digital correspondence for both professional and personal matters. It's no longer a choice between volume and quality; attackers can now have both at once, which makes them far more dangerous.

Consider the sheer power of an AI that can analyze publicly available information about you – your LinkedIn profile, company website, social media posts, news articles you’ve shared – and then craft a perfectly tailored narrative. It can mimic the writing style of your colleagues, understand the jargon specific to your industry, and even reference recent events relevant to your organization or personal life. This level of contextual awareness and linguistic prowess was previously the domain of highly skilled, patient, and expensive human social engineers. Now, it's accessible to anyone with an internet connection and a modicum of technical savvy, democratizing advanced attack capabilities and lowering the barrier to entry for cybercriminals across the globe. The implications for individuals and organizations are nothing short of profound, demanding a complete re-evaluation of our cybersecurity strategies.

When Machines Become Master Storytellers of Deceit

The true danger of AI in phishing lies in its ability to generate compelling narratives that exploit human psychology with precision. These aren't just emails with good grammar; they are sophisticated psychological operations designed to elicit a specific emotional response (urgency, fear, curiosity, or even helpfulness) that bypasses critical thinking. An AI can be prompted to create an email demanding immediate action due to a "critical security vulnerability" detected on your network, using language that mirrors official IT communications. It can simulate a desperate plea from a "friend" in distress, requesting a quick money transfer, filled with details gleaned from their social media activity that make the story painfully plausible. Fed with the results of earlier attempts, the tooling can adapt its messaging and refine its approach with each round, becoming an increasingly formidable opponent.

One of the most insidious aspects of AI-driven phishing is its capacity for rapid iteration and A/B testing on a massive scale. A human attacker might try a few different subject lines or body paragraphs, but an AI can generate thousands of variations, test them against simulated targets, and quickly identify which combinations yield the highest click-through rates or the most harvested credentials. This iterative improvement means that defensive measures, once they catch up to one wave of attacks, will soon face a new, more refined, and harder-to-detect variant. It’s a constant arms race, but with AI on the offensive side, the speed and adaptability of the attacks have increased sharply, making it very challenging for human defenders and even traditional security tools to keep pace. We are essentially fighting a ghost that can change its form at will, learning and evolving with every attempt to pin it down.

"The era of easily spotted phishing emails is rapidly fading. AI doesn't just write better English; it understands context, intent, and human psychology. It's like having a master illusionist crafting every single scam, making the impossible seem perfectly real." - Dr. Evelyn Reed, Cybersecurity Ethicist.

Furthermore, AI's role extends beyond text generation. Imagine an AI that can analyze your company’s internal communication patterns, learning the preferred channels, tone, and even specific phrases used by different departments or leadership figures. It could then generate an email that not only looks legitimate but feels authentic, slipping past both automated email filters and human skepticism with alarming ease. This level of mimicry, driven by sophisticated machine learning algorithms, creates a scenario in which genuine and malicious communications become virtually indistinguishable, forcing us to fundamentally rethink how we verify digital interactions and establish trust in an increasingly uncertain online environment.